I had a great time speaking at ODSC East this week. I am grateful for a fantastic and engaged audience. It was wonderful to hear that many found my talk helpful. Hopefully it can be of even more help here–

Author: Kerstin Frailey

Introducing jupyckage: Create Python Packages from Notebooks in One Line of Code

If you work in jupyter often, you probably find yourself sharing code between notebooks. Whether it’s creating plots, processing data, or running pipelines, replication is a part of data science. If you’re aiming to keep DRY (don’t repeat yourself), keep your code in sync across notebooks, or ease the transition from dev towards reusable code–jupyckage can help. It’s a little package that makes a big workflow upgrade.

TLDR;

If you want to convert your jupyter notebook to a python package,

pip install jupyckage

import jupyckage.jupyckage as jp

jp.notebook_to_package("<notebook_name.ipynb>")

OR

jupyckage --nb <notebook_name.ipynb>

will allow you to import your notebook as

import notebooks.src.<notebook_name>.<notebook_name>

Existing Options and Why I Don’t Love Them

Execute One Notebook Inside Another Notebook

You could use some magic to import that code, %run notebook_name.ipynb, but that’ll execute your notebook, which, in data science, is often not desirable. Here are two reasons, in addition to the ones I’ll give for nbconvert:

- If your notebook trains a model, downloads data, saves a file, or executes any long processes, you probably don’t want to run your notebook just to access its functions.

- It requires %magic, which is great in notebooks, but not useful otherwise.

I’ve rarely been in a situation in which this was a viable option.

NBConvert and Import

You could use nbconvert to create a python executable and then import that file. I don’t love this solution because

- It creates a mess in my directory that I inevitably have to clean up. As soon as I do clean it up–i.e. organize the modules into directories–I have to do the work of creating a package anyway (i.e. __init__.py files and appropriate structure) in order to import the modules.

- Usually I’m working towards reusable code, which means I’m going to have to create a package from the modules anyway. nbconvert doesn’t help with this.

- It either must be done in the terminal, which interrupts workflow, or requires bang (!) magic, which is great while working in a notebook but not great when you move past it.

- Everything in my notebook is converted to the module, which means I have to be “done” or “done-ish” with that notebook to convert it.

These were big enough problems for long enough that I finally pulled together a simple solution.

A New, Slicker Solution: jupyckage

jupyckage creates (local) python packages from notebooks in one line of code.

Pip Install

The package is up on pip — just pip install jupyckage.

Create and Import the Package

To create a package from your notebook, simply run

notebook_to_package("<notebook_name.ipynb>")

Or you can run this via terminal

jupyckage --nb <notebook_name.ipynb>

Either will create a local package for you, which you can import as

import notebooks.src.<notebook_name>.<notebook_name>

Yes, the import statement is long, but it should tab complete for you (either in jupyter or an IDE).

Also, your notebook name will be reformatted as all lower case and any spaces replaced with _. But–if your notebook name contains any disallowed characters, there will be an error.
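To make the renaming rule concrete, here is a minimal sketch of it. The helper name module_name_from_notebook is hypothetical (it is not part of jupyckage), and treating “disallowed characters” as “anything that doesn’t leave a valid Python identifier” is my assumption for illustration.

```python
def module_name_from_notebook(filename):
    """Hypothetical helper: lower-case the notebook name and swap spaces for underscores."""
    stem = filename[:-len(".ipynb")] if filename.endswith(".ipynb") else filename
    name = stem.lower().replace(" ", "_")
    # Assumption: a name is "disallowed" if it is not a valid Python identifier.
    if not name.isidentifier():
        raise ValueError(f"{filename!r} cannot be used as a module name")
    return name


print(module_name_from_notebook("My Analysis.ipynb"))
```

Under these assumptions, a name like my-notebook.ipynb would raise an error because of the hyphen.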

I recommend importing the package as something convenient, e.g. as abv where abv is your chosen abbreviation. As usual, you can access the functions and objects defined in your notebook via abv.<your_function>() and abv.<your_object>.

You Can Leave Stuff Out of the Module

Nice! If you are still working in your notebook you probably don’t want all of it accessible in a module. You can add a MD cell with the contents

# DO NOT ADD BELOW TO SCRIPT

and nothing below will be added to the module.
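If you’re curious what a cutoff like this could look like, here is a rough sketch. It is not jupyckage’s actual implementation; the function name code_above_marker and the toy notebook are made up for illustration, and the only thing taken from the notebook format is that cells carry a cell_type and a source.

```python
MARKER = "# DO NOT ADD BELOW TO SCRIPT"


def code_above_marker(notebook, marker=MARKER):
    """Return the source of each code cell that appears before the marker cell."""
    kept = []
    for cell in notebook["cells"]:
        # source may be a list of lines or a single string; join handles both
        source = "".join(cell["source"])
        if cell["cell_type"] == "markdown" and source.strip() == marker:
            break  # everything below the marker is left out
        if cell["cell_type"] == "code":
            kept.append(source)
    return kept


# Toy notebook contents (hypothetical), shaped like a parsed .ipynb file.
demo_notebook = {
    "cells": [
        {"cell_type": "code", "source": ["def add(a, b):\n", "    return a + b"]},
        {"cell_type": "markdown", "source": ["# DO NOT ADD BELOW TO SCRIPT"]},
        {"cell_type": "code", "source": ["add(1, 2)  # scratch work"]},
    ]
}

print(code_above_marker(demo_notebook))
```

Only the add function survives; the scratch cell below the marker stays in the notebook.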

Directories and Files Created

You’ll also notice that the file structure below has been created.

notebooks/
├── src/
│   └── <notebook_name>/
│       ├── __init__.py
│       └── <notebook_name>.py
└── bin/
    └── <notebook_name>.py  # executable
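To see why that layout gives you the long import path, here is a small sketch that builds the src/ part of the structure by hand in a temporary directory and imports from it. The notebook name demo_nb and the greet function are made up, and I’m assuming (from the tree, which shows no __init__.py in notebooks/ or src/) that those two directories are picked up as implicit namespace packages.

```python
import importlib
import sys
import tempfile
from pathlib import Path

# Hypothetical notebook name, used only for this demonstration.
name = "demo_nb"

# Recreate notebooks/src/<notebook_name>/ in a scratch directory.
root = Path(tempfile.mkdtemp())
package_dir = root / "notebooks" / "src" / name
package_dir.mkdir(parents=True)
(package_dir / "__init__.py").write_text("")
(package_dir / f"{name}.py").write_text(
    "def greet():\n    return 'hello from the notebook'\n"
)

# The import resolves relative to the directory that contains notebooks/.
sys.path.insert(0, str(root))
importlib.invalidate_caches()
abv = importlib.import_module(f"notebooks.src.{name}.{name}")

print(abv.greet())
```

The takeaway: the import works from whichever directory holds notebooks/, which is usually the directory you launched jupyter from.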

Create a Package from a Collection of Notebooks

If you want to convert other notebooks in the same directory, they’ll show up alongside your first one.

notebooks/
├── src/
│   ├── <notebook_name>/
│   │   ├── __init__.py
│   │   └── <notebook_name>.py
│   ├── <notebook_name2>/
│   │   ├── __init__.py
│   │   └── <notebook_name2>.py
│   └── <notebook_name3>/
│       ├── __init__.py
│       └── <notebook_name3>.py
└── bin/
    ├── <notebook_name>.py
    ├── <notebook_name2>.py
    └── <notebook_name3>.py

More Info

If you want to learn more, request a feature, report a bug, or contribute–

- pypi https://pypi.org/project/jupyckage/0.1.2/

- code https://github.com/kefrailey/jupyckage

- issues https://github.com/kefrailey/jupyckage/issues

Thanks for reading. Hope this helps and have a great day!

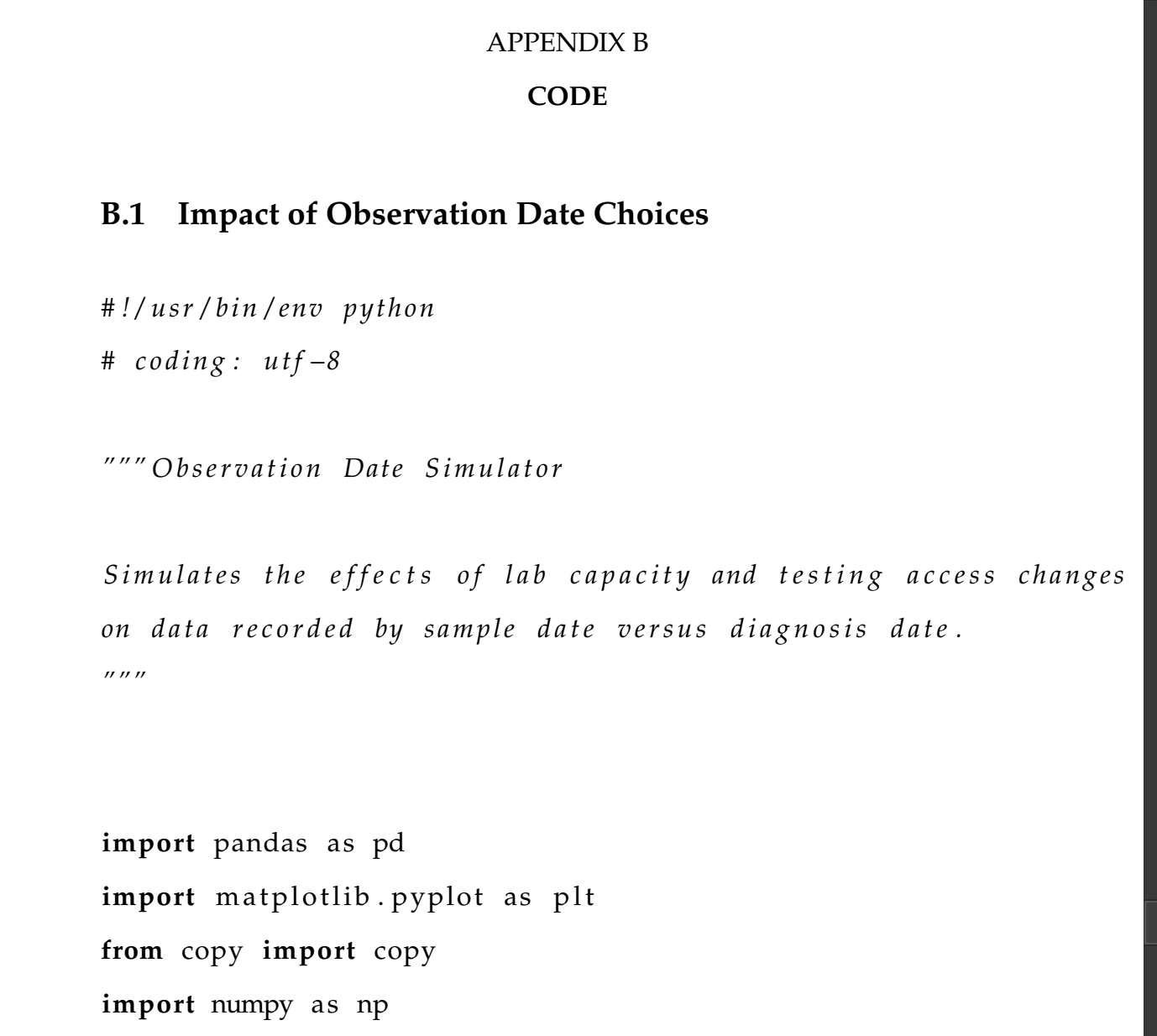

Quick Little Hack: Camera-Ready Scripts and LaTex From Jupyter Notebooks

I’m rounding that corner of my research where the end seems to be in sight–which also means that properly formatting my work for publication can’t be put off too much longer. Depending on what I’m coding, I’m either in PyCharm, Sublime, or Jupyter. My dissertation is, of course, in LaTeX (…as well as on my whiteboard, scattered printer paper, post-its, long-forgotten notebooks, and the margins of so many journal papers). If my work is already in a script, then getting it to LaTeX is a snap. But if it’s written in Jupyter, it needs a little love before it’s fit for prime time.

From Script to Latex

There’s a nice little package for LaTex that imports your python script (your_script.py) and presents it neatly

\usepackage{listings}

and you can include your script via

\lstinputlisting[language=Python]{path/to/your_script.py}

It will appear nicely formatted with appropriate bolding, etc.

That’s great if your scripts already look nice. And sure, some of mine do. But I’ve built a few simulations in Jupyter notebooks.

From Jupyter Notebook to Script

There is a convenient means to create a python executable from your notebook. You can run this in your terminal

jupyter nbconvert --to script path/to/your_notebook.ipynb

This will produce a your_notebook.py executable. It’s fine if you just want to run it, but it’s not all that great to look at. It includes cell numbers, awkward spacing, and just looks a bit messy. It’s not what I want in my dissertation. And I’m certainly not going to open up each file and tidy them up manually–especially not every time I tweak and edit my code. I know I will eventually forget to export and tidy the updated script. So, let’s just make it mindless.

From Jupyter Notebook to Script-Fit-For-Latex

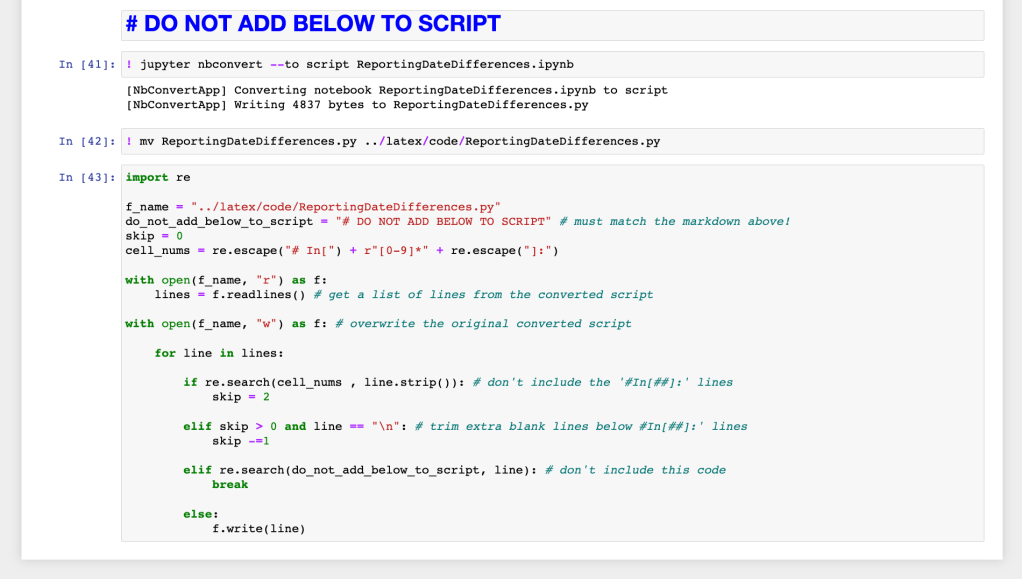

Using a little magic(!) and a bit of processing, I added these lines to the end of my notebooks. (Don’t worry–I’ve also added the code below for copy-paste purposes.)

NOTE: The first cell is markdown (the rest are python cells):

# DO NOT ADD BELOW TO SCRIPT

The next two cells are magic-ed (the ‘!’ at the beginning). The first converts this_notebook.ipynb (the actual name of the notebook you’re currently in) to this_notebook.py

! jupyter nbconvert --to script this_notebook.ipynb

The next cell is optional, but there’s a good chance you want it for organizational purposes (especially if, as I recommend, you are versioning your work via github/gitlab/etc). Also, if your notebook takes a long time to run, consider copying (cp) instead of moving (mv) your file. This will come up at the end.

! mv this_notebook.py your/desired/location/this_notebook.py

The next cell will rewrite your script in place, but leave out the extraneous stuff. NOTE: you will have to update f_name

import re

f_name = "your/desired/location/this_notebook.py"
do_not_add_below_to_script = "# DO NOT ADD BELOW TO SCRIPT"  # must match the markdown above!
skip = 0
# matches the '# In[##]:' cell markers (and '# In[ ]:' for cells that were never run)
cell_nums = re.escape("# In[") + r"[0-9 ]*" + re.escape("]:")

with open(f_name, "r") as f:
    lines = f.readlines()  # get a list of lines from the converted script

with open(f_name, "w") as f:  # overwrite the original converted script
    for line in lines:
        if re.search(cell_nums, line.strip()):  # don't include the '# In[##]:' lines
            skip = 2
        elif skip > 0 and line == "\n":  # trim extra blank lines below the '# In[##]:' lines
            skip -= 1
        elif re.search(do_not_add_below_to_script, line):  # don't include this code
            break
        else:
            f.write(line)
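If you’d like to check the filtering without touching any files, the same logic can be sketched as a function that takes and returns a list of lines. The function name clean_script_lines and the sample lines are mine, made up to resemble a converted script. The pattern here also tolerates a blank counter (# In[ ]:), which is my assumption about how cells that were never run get exported.

```python
import re

CELL_MARKER = re.compile(r"# In\[[0-9 ]*\]:")
STOP_MARKER = "# DO NOT ADD BELOW TO SCRIPT"


def clean_script_lines(lines):
    """Drop '# In[..]:' lines and the blank lines right after them; stop at the marker."""
    cleaned = []
    skip = 0
    for line in lines:
        if CELL_MARKER.search(line.strip()):
            skip = 2  # allow up to two blank lines to be trimmed after a cell marker
        elif skip > 0 and line == "\n":
            skip -= 1
        elif STOP_MARKER in line:
            break  # nothing from the marker onward is kept
        else:
            cleaned.append(line)
    return cleaned


# Made-up sample resembling a converted notebook.
sample = [
    "# In[1]:\n",
    "\n",
    "\n",
    "x = 1\n",
    "\n",
    "# In[2]:\n",
    "\n",
    "\n",
    "print(x)\n",
    "\n",
    "# # DO NOT ADD BELOW TO SCRIPT\n",
    "\n",
    "# In[3]:\n",
    "\n",
    "\n",
    "get_ipython().system('jupyter nbconvert --to script this_notebook.ipynb')\n",
]

print("".join(clean_script_lines(sample)))
```

On this sample, only the two code lines and the blank line after each make it through; the cell markers and everything from the marker down are gone.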

Make sure to update your LaTeX path as well

\lstinputlisting[language=Python]{your/desired/location/this_notebook.py}

If the Script isn’t updating… Don’t Worry!!!

It just means nbconvert is looking at a previously saved version (annoying, I know). In Jupyter Notebook, just go to File –> Save & Checkpoint. Then rerun this code.

If LaTex isn’t updating… Don’t Worry!!!

LaTeX is finicky. We all know that. Fortunately, when an imported file isn’t updating, the fix is usually the same.

Delete the your/desired/location/this_notebook.py file. Rebuild your LaTex file so that you get an error (or just delete the appropriate build file). Then rerun the code I’ve shown here — but if you chose just to copy your file, run only that cell and below.

Hope this helps! I look forward to seeing your beautiful code in a publication somewhere soon!

Oldies But Goodies: “On AI ROI: The Questions You Need To Be Asking”

As promised in my previous post, here’s a complementary talk to that one.

Giving this talk was particularly memorable because I was back in the Bay Area, back where I got my data science-in-business start, and back with so many of my first data colleagues. After learning so much from the data community in NorCal, it was a joy to bring them something in return.

To be honest, I was a bit worried that the audience would find this very practical, very look-behind-the-curtain talk too irreverent to the myth of the all-magical, all-fixing data science. But I was delighted to find the talk very well received. In the midst of the data science hype, a bit of bubble bursting and some guidelines for practical oversight seemed to be just what was needed.

Oldie But Goodie: “Building an Effective Project Portfolio”

I’m excited to be getting back into giving talks in person (or however we’re giving talks these days). But as I’ve gotten back on the circuit I’ve also gotten nostalgic for meeting and mingling with my colleagues.

ODSC / Ai X (formerly Accelerate AI) has always been one of my favorite conferences for its incredible data community. Since I can’t relive the engaging post-talk conversations and coffee breaks, I thought I could at least post the decks.

I gave my first ODSC/Ai X talk in Boston during the spring of 2019. That’s what’s below. The next post will feature a deck from the other coast and the opposite season–but on a complementary topic.

Looking forward to seeing some of you–though probably only through zoom–sometime soon!

“Chasing Impact” Talk at the GET Cities Kickoff Summit

A few weeks ago (January 23rd, 2021 to be precise) I had the pleasure of joining GET Cities for their inaugural Kickoff Summit! GET Cities is an incredible fellowship program building a more inclusive future for tech by fostering community and accelerating growth in underrepresented genders. If you’re looking for the up-and-coming movers and shakers, look no further than GET Cities.

I was asked to speak about technical project development and building successful products. I very happily obliged. Hope you enjoy the deck!

I’m Back! & I’ve Been Featured!

It took a while, but I’ve got my site back! I’m excited to backfill some posts I’ve been trying to put up, and I’m so grateful to be featured alongside some outstanding AI professionals! Link

More content to–quickly!–come!

Why & When: Cross Validation in Practice

Nearly every time I lead a machine learning course, it becomes clear that there is a fundamental acceptance that cross validation should be done…and almost no understanding as to why it should be done—or how it is actually done in a real-world workflow. Finally I’ve decided to move my answers from the white board to the blog post. Hope this helps!

Cross validation is like standing in a line in San Francisco: Everyone’s doing it, so everybody does it, and if you ask someone about it, chances are they don’t know why they’re doing it.

Fortunately for those who might be doing so without fully knowing why, there is very good reason to cross validate (which is generally not true about joining any random Bay Area queue).

So, other than our now inbred inclination as data scientists to do the thing that everyone else is doing…why should we cross validate?

The Why & the When

To discuss why we perform cross-validation, it’s easiest to review how and when we incorporate cross validation into a data science workflow.

To set the stage, let’s pretend we’re not doing a GridSearchCV. Just good old fashioned cross validation.

And, let’s forget about time. Time of observation usually matters quite a bit…and that complicates things…quite a bit. For right now let’s pretend time of observation doesn’t matter.

First Partition of Data

Divide your data into a holdout set (maybe you prefer to call it a testing set?) and the rest of your data.

What’s a good practice here? If your data isn’t too big, import your entire_dataset and randomly sample [your chosen holdout percent]% of observation ids (or row indices). Create two new data frames, holdout_data and rest_of_data. Clear your variable entire_dataset. Export holdout_data to the file or storage type of your convenience, and clear your variable holdout_data.

If your data is too big, adapt a similar workflow by using indices instead of data frames and only loading data when necessary.

To clear a variable in python, you’re welcome to del your variable or set it to None, as you’d like. Just make sure you can’t access the data by accident.
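Here is a minimal sketch of that first partition using only the standard library. The split_holdout helper, the 20% holdout, and the toy dictionary standing in for a data frame are all assumptions for illustration; with real data you’d do the same thing with your data frame’s row indices.

```python
import random


def split_holdout(row_ids, holdout_fraction=0.2, seed=0):
    """Randomly assign row ids to a holdout set and to the rest."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    n_holdout = round(len(row_ids) * holdout_fraction)
    holdout_ids = set(rng.sample(list(row_ids), n_holdout))
    rest_ids = [i for i in row_ids if i not in holdout_ids]
    return sorted(holdout_ids), rest_ids


# Toy stand-in for entire_dataset: row id -> observation.
entire_dataset = {i: {"x": i, "y": 2 * i} for i in range(10)}

holdout_ids, rest_ids = split_holdout(list(entire_dataset))
holdout_data = {i: entire_dataset[i] for i in holdout_ids}
rest_of_data = {i: entire_dataset[i] for i in rest_ids}

# ... export holdout_data to storage here ...

# Clear the variables so the holdout rows can't be touched by accident.
del entire_dataset, holdout_data

print(len(holdout_ids), "holdout rows,", len(rest_of_data), "rows left to work with")
```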

EDA & Feature Engineering

Let’s assume we then follow good practices around exploratory data analysis and creating a reusable feature engineering pipeline.

Notice that we’re only using the rest_of_data for EDA and designing our feature engineering pipeline. Let’s think about this for a second. Only doing this on the rest_of_data allows us to test the entire modeling process when we test on the holdout data. Clever, right?

Now, we’ve got our data ready to go and we have an automated way to process new data, via our pipeline.

Specify a Model

We need to specify a model, e.g. choose to use ‘random forest’. For this non-grid-search scenario, we’re going to also say this is where tuning occurs.

Cross Validation

Now we cross-validate.

You know the idea: take the rest_of_data and partition it into k folds (partitions). For step j, create a [j]_train_set (all the data but the jth partition) and a [j]_test_set (the jth partition). Train on the training set, test on the test set, record the test performance, and throw away the trained model. Do this for all k partitions.

Or, more likely, have sklearn do it for you.

Go ahead, we’ll wait.

Now we have k data points of “sample performance” of our specified model.

We inspect these results by looking at the mean (or median) score and the variance. This is the key to why we cross validate.
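For anyone who wants to see the loop spelled out, here is a bare-bones sketch with no libraries beyond the standard one. Everything in it is a stand-in: the toy target values, the “model” that just predicts the training mean, and mean absolute error as the score. In practice you’d more likely let sklearn handle the folds and scoring.

```python
import random
import statistics


def k_fold_indices(n_rows, k, seed=0):
    """Shuffle row indices and deal them into k roughly equal folds."""
    indices = list(range(n_rows))
    random.Random(seed).shuffle(indices)
    return [indices[j::k] for j in range(k)]


def fit_mean_model(train_y):
    """Toy 'specified model': always predict the training mean."""
    return statistics.fmean(train_y)


def mean_absolute_error(prediction, test_y):
    return statistics.fmean(abs(y - prediction) for y in test_y)


# Toy target values standing in for rest_of_data.
rest_of_data_y = [3.0, 5.0, 4.0, 6.0, 5.5, 4.5, 3.5, 6.5, 5.0, 4.0]
k = 5
folds = k_fold_indices(len(rest_of_data_y), k)

scores = []
for j in range(k):
    test_idx = folds[j]  # the jth partition
    train_idx = [i for f, fold in enumerate(folds) if f != j for i in fold]
    model = fit_mean_model([rest_of_data_y[i] for i in train_idx])
    scores.append(mean_absolute_error(model, [rest_of_data_y[i] for i in test_idx]))
    # the trained model is thrown away here; only the score is kept

print("mean score:", statistics.fmean(scores))
print("variance of scores:", statistics.variance(scores))
```

The two printed numbers are the whole point: k scores summarized by their center and their spread.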

Why We Perform Cross Validation:

We want to get an idea of how well the specified model can generalize to unseen data and how reliable that performance is. The mean (or median) will give us something like expected performance. The variance gives us an idea of how likely it is to actually perform near that ‘expected’ performance.

I’m not making this up–you can look at the sklearn docs and they, too, look at the variance. Some people even plot out the performances.

If you’re coming from a traditional analytics or statistics background, you might think “don’t we have theories for that? don’t we have p-values for that? don’t we have modeling assumptions for that?” The answer is, generally, no. We don’t have the same strong assumptions around the distribution of data or the performance of models in broader machine learning as we do in, say, linear models or GLMs. As a result, we need to engineer what we can’t theory [sic]. Cross validation is the engineered answer to “how well will this perform?”

Assuming the performance measures are acceptable, we’ve now found a specification of a model we like. Huzzah! (Otherwise, go back and specify a different model and repeat this process.)

Note: we did not get a trained model; we got a bunch of trained models, none of which we will actually use and all of which we will immediately discard. What we found was that the “specified model”, e.g. random forest, was a good choice for this problem.

Get the Performance Estimate

Armed with a specified model we like, we train this specified model on the entirety of rest_of_data. Sweet.

Now we load the holdout_data and run it through the feature engineering pipeline. We test our trained-on-rest-of-data specified model on the holdout_data. This gives us our performance estimate.

We hold a silent sort of intuition about performance at this step because rest_of_data is necessarily larger than any training set used during cross validation.

The intuition is that the specified model trained on this larger set would not have higher variation in performance and on average will perform at least as well as the average performance seen during cross validation. However, to check this we would need to repeat the steps from “First partition of Data” through this one multiple times.

NOTE: We do not assume it will perform at or better than average performance seen in cross validation. That is a very different assumption.

NOTE: we did not get our final trained model; we got a trained model which we will immediately discard. What we found was a better estimate for in-production performance of the “specified model”, e.g. random forest.

Train Your Final Model

Assuming our performance estimate is sufficient, now we train the specified model on the entire_dataset. This is the trained model we use for production and/or decision making. This is our final model.
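The last two steps can be sketched in a few lines. As before, this is a toy under stated assumptions: the “specified model” just predicts the training mean, the score is mean absolute error, the numbers are made up, and the feature engineering pipeline is left out entirely.

```python
import statistics


def fit(train_y):
    """Toy stand-in for training the specified model: predict the training mean."""
    return statistics.fmean(train_y)


def score(model, test_y):
    """Mean absolute error of the constant prediction (lower is better)."""
    return statistics.fmean(abs(y - model) for y in test_y)


# Toy target values standing in for the two partitions made at the start.
rest_of_data_y = [3.0, 5.0, 4.0, 6.0, 5.5, 4.5, 3.5, 6.5]
holdout_data_y = [5.0, 4.0]

# Get the performance estimate: train on rest_of_data, test once on holdout_data.
estimate_model = fit(rest_of_data_y)
performance_estimate = score(estimate_model, holdout_data_y)
del estimate_model  # this model is discarded; only the estimate is kept

# Train the final model on the entire dataset.
entire_dataset_y = rest_of_data_y + holdout_data_y
final_model = fit(entire_dataset_y)

print("performance estimate (MAE):", performance_estimate)
```

Notice that the model that produced the estimate and the model that goes to production are two different fits of the same specification.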

Monitoring & Maintenance

Until, as always, we iterate.

Hope this gave a little more insight to cross-validation in practice!

The Impact Hypothesis: After Thoughts

I recently wrote a piece on the impact hypothesis–that guess we make about how we turn the output of a data science project into impact. The tldr (but, you know, actually do read it) is that successful data science projects often fail to produce impact because we assume that they will. And that needs to change.

The Idea, in brief

Too often it’s taken for granted that if the algorithm is performing well then the business will improve. However, there’s almost always a step we need in order to take data science output (e.g. predicting churned customers) and create impact (e.g. fewer churned customers).

The step in between is the how we transform data science output into impact (e.g. targeting those predicted would-churn customers with promotions). It’s often assumed the how will happen. But if your assumption proves false, even amazing data science can not be impactful.

Instead of assuming the how will happen, we should explicitly state it as a hypothesis, critically scope it, and communicate it to all stakeholders.

This task should generally fall to the project manager or a nontechnical stakeholder–but its results should be shared with nontechnical and technical team members alike (that includes the data science team!).

If you incorporate this into your project scoping process, I guarantee you’ll have much more impactful projects and a much happier data science team.

So how come you’ve never heard of it?

At this point you may be wondering, if the impact hypothesis is so important why is it so often overlooked? And also, why have you never heard of it?

We conflate resource allocation with importance. The data science part of the project is the part that demands the most investment in terms of time, personnel-power, and opportunity costs. Because we allocate it those resources, we also tend to give it all the attention during planning as well.

In reality, planning and scoping should be dedicated according to how critical something is to the success of a project. (Of course, don’t take longer than is needed to accomplish the task. Dur.) We know that if the impact hypothesis fails, the project cannot succeed. Therefore it easily merits a dedicated critique.

The impact hypothesis is implied. This is a problem for many reasons. The biggest is that implicitness removes ownership. So often the impact hypothesis goes unstated, leaving it both nebulous and anonymous.

The hypothesis needs to be made explicit so that it can be challenged, defended, and adjusted. Without a clear statement, the how goes without critique because there is nothing to be critiqued.

If someone does challenge an unstated hypothesis, it is likely to become a shapeshifter of sorts; one that morphs slightly whenever a hole is identified, as hands are waved about it, dismissing and dodging valid concerns.

Without ownership there is no one to come to when a flaw in the hypothesis is uncovered. Just the act of writing down the hypothesis will clarify what it is and where it needs to be vetted.

The hypothesis is assumed to be correct. This is in part a result of the attribution issue. Having no notion of ownership often implies it’s ‘common knowledge.’ That is, a lack of ownership implies no one owns the idea because it’s so obviously correct. We shouldn’t accept ‘obvious’ for an impact hypothesis. It’s too critical to the project.

Sometimes the hypothesis is assumed to be correct because it appears to be straightforward or trivial. Unfortunately, the appearance of simplicity often belies an idea that is no simpler to execute than those that are clumsy to describe.

Even if the hypothesis really is simple, it still needs to be challenged. This hypothesis is the only thing that connects the data science project to the business impact. It’s the only thing that justifies devoting the resources and personnel to a major project. Certainly it merits its own scoping.

It wasn’t named. The step didn’t have a name. That shortcoming is in part because we’re all still figuring out this new(ish) data science thing. This idea isn’t novel–nontechnical scoping is already a part of effective cross-team collaboration. Naming it is just a way to acknowledge that nontechnical scoping is also critical in data science projects.

Now go forth and hypothesize!

Talk the Talk: Data Science Jargon for Everyone Else (Part 1: The Basics)

Bite-sized bits of data science for the non-data scientist

Disclaimer: All * terms will be defined at a later point, as well as many others.

data scientist: a role that includes basic engineering, analytics, and statistics; often builds machine learning models

- depending on the company, might be a product analyst, research scientist, statistician, AI specialist, or other

- a job title made up by a guy at Facebook and a guy at LinkedIn trying to get better candidates for advanced analytics positions

in a sentence: We need to hire a data scientist!

data science: advanced analytics, plus coding and machine learning

- producing insights for decision makers or putting models into production*

- 80% cleaning data, 20% data sciencing

in a sentence: We need to hire a data science team!

artificial intelligence: the ability for a machine to produce inference from input without human directive

- used to describe everything from basic data science to self-driving cars to Ex Machina’s Ava

- no one agrees on a definition

- “AI is whatever hasn’t been done yet.” ~ Douglas Hofstadter

in a sentence: We just got our AI startup funded–join our team and become our first data scientist!

machine learning: algorithms and statistical models that enable computers to uncover patterns in data

- claims a large part of old school statistics as its own, plus some fun new algorithms

- it’s probably logistic regression. or a random forest….or linear regression.

- AI if you’re feeling fancy

in a sentence: We need you to machine learn [the core of our startup].

model: a hypothesized relationship about the data, usually associated with an algorithm

- may be used to refer to the algorithm itself, the relationship, a fit* model, the statistical model, maybe the mathematical model, perhaps Emily Ratajkowski, certainly not a small locomotive

- it’s probably logistic regression. or random forest….or linear regression.

in a sentence: I’m training a model. (and not how to turn left)

feature: an input to the model; x value; a predictor; a column of input data

- when a data scientist is ‘engineering,’ this is usually what they’re making

- if your model says that wine points predict price, then sommeliers’ ratings are your feature

in a sentence: Feature engineering will make or break this model.

label: the output of a predictive model; y value; the column of output data

- the thing the startup got funded to predict